MySQL to TiDB Migration: Streaming 100 Billion Records in Real Time

A payment service needed both real-time streaming and historical data transformation across 40 MySQL tables into one TiDB table. Xstreami migrated 100 billion records with zero data loss, applying complex business logic with zero lines of code written.

Divine Steve March 31, 2026

The Challenge: Real-Time Streaming + 100 Billion Historical Records

A leading payment service provider came to Xstreami with a primary goal: set up a real-time data streaming pipeline so every transaction in their MySQL databases would instantly flow into a high-performance TiDB analytics layer. Standard streaming — or so it seemed.

Then came the full picture. Their system held over 100 billion historical payment records spread across 40 MySQL tables — years of transactions, merchant profiles, fraud logs, reconciliation data, settlement records, and more. This historical data could not be left behind. It needed to be transformed with the same complex business logic as the live stream and loaded into TiDB before go-live. And there was one non-negotiable requirement: absolute zero data loss.

Every new payment transaction — INSERTs, UPDATEs, DELETEs — had to be captured from MySQL in real-time, transformed on-the-fly with complex business logic, and delivered to TiDB in under 100 milliseconds.

100 billion+ existing records from 40 fragmented MySQL tables needed to be read, enriched, transformed through multi-layer business logic, and loaded into a single consolidated TiDB table — with guaranteed zero data loss.

The operational challenges driving this transformation were severe:

- Query Performance Collapse: Joining 15–20 MySQL tables for a single analytics query took 5–10 seconds during peak hours, which is unacceptable for a real-time payment environment

- Massively Complex Transformation Logic: Business rules spanned fee calculations, currency conversions, tiered risk scoring, AML compliance flags, merchant-level settlement adjustments, and dynamic fraud thresholds, all needing to apply consistently to both historical and live data

- Zero Tolerance for Data Loss: In a regulated payments environment, even a single missing record means compliance failure, reconciliation errors, and potential financial liability. Data accuracy had to be 100%

- No Production Disruption: The migration of 100B+ historical records had to run in parallel with live transaction processing. MySQL could not be taken offline, and live streaming could not be delayed

- No Custom Code Acceptable: Engineering resources were already stretched. The team needed a platform that business analysts could configure and update without writing or deploying code

The core requirement in one sentence: Stream live MySQL transactions to TiDB in real-time while simultaneously transforming 100 billion historical records — all with zero data loss and zero custom code. No traditional ETL tool on the market could do both simultaneously at this scale.

The Xstreami Solution: No-Code Data Transformation

Enter Xstreami, a real-time data streaming platform that changes how organizations handle data consolidation and MySQL to TiDB migration. For this payment service, Xstreami became the bridge between their legacy MySQL infrastructure and a modern, high-performance TiDB database.

What Makes Xstreami Different?

Unlike traditional ETL tools that require extensive programming and maintenance, Xstreami takes a no-code approach to data consolidation.

The Migration Journey: From 40 Tables to 1

The transformation happened in two critical phases: Initial Data Sync (IDS) for historical data migration, followed by continuous real-time streaming for ongoing transactions.

The team used Xstreami's visual interface to map the 40 MySQL source tables to a unified TiDB schema. All transformation logic—data enrichment, field mapping, calculations, and business rules—was configured through the UI without writing a single line of code. The entire configuration took just 2 days.

Xstreami's IDS engine kicked off the massive data migration. Across the migration window, 100 billion payment records were read from MySQL, transformed according to the configured logic, and loaded into TiDB. The parallel processing capabilities ensured minimal impact on production MySQL databases. Progress was monitored in real-time through Xstreami's dashboard.

Once IDS completed, Xstreami automatically transitioned to real-time streaming mode. Every INSERT, UPDATE, and DELETE operation in the MySQL payment tables was captured instantly and streamed to TiDB with the same transformation logic. Latency from source to destination: less than 100 milliseconds.

The team conducted comprehensive validation checks comparing MySQL source data with the TiDB destination. Xstreami's built-in data quality tools verified record counts, field values, and business logic accuracy, achieving a 100% success rate—fully meeting the standards expected for a payment system.
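The count and field-value checks described above can be pictured as a keyed reconciliation between the rows that should exist and the rows that actually landed. The following is a minimal sketch under stated assumptions, not Xstreami's built-in data quality tooling: rows are plain Python dicts, the key column `txn_id` is hypothetical, and the "expected" rows are assumed to be source data that has already been passed through the transformation logic.

```python
import hashlib
import json


def row_digest(row):
    """Stable hash of a row's contents, independent of key order."""
    payload = json.dumps(row, sort_keys=True, default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def reconcile(expected_rows, actual_rows, key="txn_id"):
    """Compare expected (transformed source) rows against destination rows."""
    expected = {r[key]: row_digest(r) for r in expected_rows}
    actual = {r[key]: row_digest(r) for r in actual_rows}
    shared = expected.keys() & actual.keys()
    return {
        "expected_count": len(expected),
        "actual_count": len(actual),
        # Keys present in the source but absent from the destination.
        "missing": sorted(expected.keys() - actual.keys()),
        # Keys present in the destination that the source never produced.
        "unexpected": sorted(actual.keys() - expected.keys()),
        # Keys present on both sides whose field values differ.
        "mismatched": sorted(k for k in shared if expected[k] != actual[k]),
    }


if __name__ == "__main__":
    source = [{"txn_id": 1, "net": "9.70"}, {"txn_id": 2, "net": "19.40"}]
    dest = [{"txn_id": 1, "net": "9.70"}, {"txn_id": 2, "net": "19.00"}]
    print(reconcile(source, dest))
```

A report with equal counts and empty `missing`, `unexpected`, and `mismatched` lists is what a 100% validation result would look like in this simplified model.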

The payment service cut over to querying TiDB for analytics and reporting while maintaining MySQL for transactional operations. Xstreami continues to run 24/7, streaming every payment transaction in real time. The no-code platform allows the team to modify transformation logic instantly whenever business requirements change.

Deep Dive: The Payment Data Transformation

Let's examine exactly how Xstreami transformed the complex 40-table payment structure into a single, queryable table optimized for high-performance data pipelines.

Source: 40 MySQL Payment Tables

The original MySQL schema was highly normalized, with its 40 tables spread across multiple categories.

The Challenge: A typical payment analytics query required joining 15-20 of these tables, resulting in:

- Query execution times of 5-10 seconds during peak hours

- Heavy load on the production MySQL database

- Complex SQL that only senior engineers could write

- Delayed insights due to query performance bottlenecks

Transformation: Xstreami's No-Code Logic

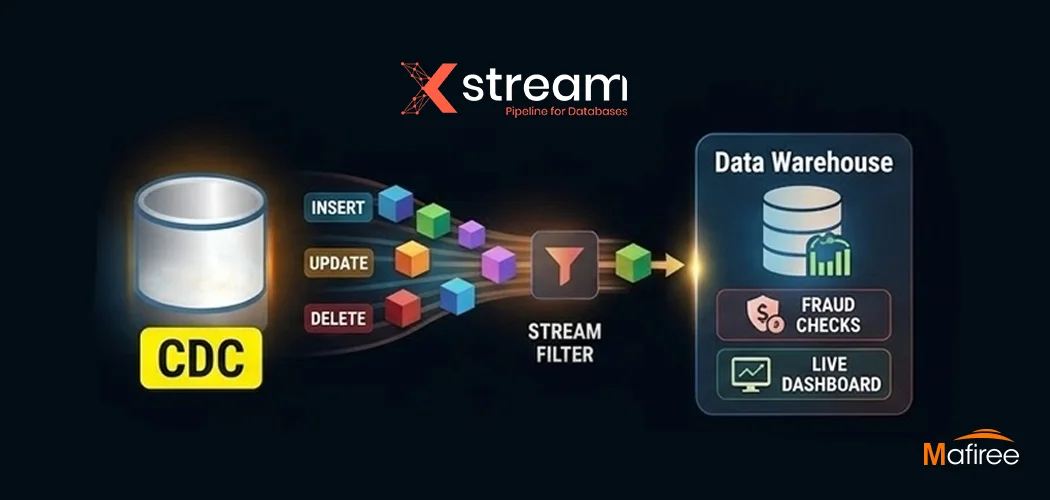

Monitor all 40 MySQL tables for changes using CDC (Change Data Capture). Capture every INSERT, UPDATE, and DELETE operation in real-time.

For each transaction event, perform lookups to enrich data from related tables: merchant details, customer info, payment method, gateway, country, currency, etc.

Map source fields to destination schema. Rename fields for clarity, combine fields where needed, and format data according to business requirements.

Compute derived fields: fees, net amounts, currency conversions, tax calculations, merchant share, risk scores, and business metrics.

Apply validation rules to ensure data quality: check for null values, validate formats, verify referential integrity, and flag anomalies.

Write the enriched, transformed record to the consolidated TiDB table using optimized batch inserts for maximum throughput.
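For readers who think in code, the steps above can be sketched in a few lines of Python. This is an illustration only and not Xstreami's implementation, which the case study says is configured entirely through the UI. The field names, the `MERCHANTS` lookup, and the 3% `FEE_RATE` are hypothetical, and a captured change event is assumed to arrive as a plain dict.

```python
from decimal import Decimal

# Hypothetical lookup data standing in for related MySQL tables.
MERCHANTS = {"m-1": {"name": "Acme Store", "country": "IN"}}
FEE_RATE = Decimal("0.03")  # illustrative flat fee, not a figure from the case study


def enrich(event, merchants):
    """Enrichment: attach merchant details to the raw change event."""
    merchant = merchants.get(event["merchant_id"], {})
    return {**event,
            "merchant_name": merchant.get("name"),
            "merchant_country": merchant.get("country")}


def map_fields(row):
    """Field mapping: rename source columns to the destination schema."""
    return {
        "transaction_id": row["id"],
        "merchant_id": row["merchant_id"],
        "merchant_name": row["merchant_name"],
        "merchant_country": row["merchant_country"],
        "gross_amount": Decimal(row["amt"]),
    }


def compute(row):
    """Calculations: derive the fee and the net amount."""
    fee = (row["gross_amount"] * FEE_RATE).quantize(Decimal("0.01"))
    return {**row, "fee": fee, "net_amount": row["gross_amount"] - fee}


def validate(row):
    """Validation: return a list of problems; an empty list means clean."""
    problems = []
    if row["merchant_name"] is None:
        problems.append("unknown merchant")
    if row["gross_amount"] <= 0:
        problems.append("non-positive amount")
    return problems


def transform(event, merchants=MERCHANTS):
    """Run one captured event through enrich, map, compute, and validate."""
    row = compute(map_fields(enrich(event, merchants)))
    return row, validate(row)


def batches(rows, size):
    """Write step: group rows so a writer can issue batch inserts."""
    for start in range(0, len(rows), size):
        yield rows[start:start + size]


if __name__ == "__main__":
    event = {"op": "INSERT", "id": 7, "merchant_id": "m-1", "amt": "100.00"}
    print(transform(event))
```

Because the same `transform` function can be fed either historical rows or live change events, a structure like this is one way to keep business logic identical across both paths, which is the consistency requirement the case study emphasizes.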

The Power of No-Code: All of this complex transformation logic was configured through Xstreami's visual interface in less than 2 days. No Java, no Python, no SQL scripts. Just point-and-click configuration with instant deployment.

Destination: Single TiDB Payment Analytics Table

The resulting schema in TiDB is a single, fully denormalized, analytics-optimized table.

The Result: Simplified Queries

What once required complex 15-20 table joins now becomes a query against a single table.
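As a rough illustration of the single-table shape, the Python sketch below runs a per-merchant aggregate with no joins. The table name `payment_analytics` and all of its columns are hypothetical, and an in-memory SQLite database stands in for TiDB purely so the example is runnable. It is not the schema or the query from the project.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical denormalized table. REAL keeps the demo short;
# a production payments schema would use an exact DECIMAL type.
conn.execute(
    """CREATE TABLE payment_analytics (
           transaction_id INTEGER PRIMARY KEY,
           merchant_name  TEXT,
           currency       TEXT,
           gross_amount   REAL,
           fee            REAL,
           status         TEXT
       )"""
)
conn.executemany(
    "INSERT INTO payment_analytics VALUES (?, ?, ?, ?, ?, ?)",
    [
        (1, "Acme Store", "INR", 100.0, 3.0, "SETTLED"),
        (2, "Acme Store", "INR", 50.0, 1.5, "SETTLED"),
        (3, "Globex", "USD", 20.0, 0.6, "FAILED"),
    ],
)


def settled_totals(connection):
    """Per-merchant settled volume read from one table, with no joins."""
    return connection.execute(
        """SELECT merchant_name, COUNT(*), SUM(gross_amount), SUM(fee)
           FROM payment_analytics
           WHERE status = 'SETTLED'
           GROUP BY merchant_name
           ORDER BY merchant_name"""
    ).fetchall()


print(settled_totals(conn))  # [('Acme Store', 2, 150.0, 4.5)]
```

Merchant name, currency, and status already sit on each row, so the lookups that a normalized schema resolves with joins at query time have been paid once, at write time.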

Performance & Business Impact

The MySQL to TiDB migration delivered transformative results across all dimensions of the payment service's data infrastructure.

Real Business Benefits

The Bottom Line: The payment service achieved a 10x improvement in data infrastructure efficiency while eliminating the need for complex custom code. Xstreami's no code platform transformed their ability to deliver real-time payment insights at massive scale.

How Xstreami Achieved Zero Data Loss at 100 Billion Record Scale

Transforming 100 billion historical payment records while simultaneously streaming live transactions is not a problem that scales with brute force. It requires an architecture purpose-built for exactness — where every record is accounted for, every transformation is verifiable, and no interruption can cause data loss. Xstreami's Initial Data Sync (IDS) engine was designed for exactly this.

Why IDS Guarantees Zero Data Loss

Xstreami's IDS engine eliminates data loss through layered, redundant guarantees: not just one safeguard, but many.

IDS Performance Highlights:

- Peak throughput sustained at 15,000+ records per second without impacting live MySQL workloads

- Automatic recovery from network interruptions: resumes at the exact checkpoint, with zero duplicate records

- Parallel processing across all 40 source tables with intelligent rate limiting

- End-to-end data validation success rate: 100%, with every record accounted for
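The checkpoint-and-resume behavior in the list above can be illustrated with a small sketch. It is a hypothetical model, not the IDS engine's actual design: it assumes rows are copied in primary-key order, that the destination write is an upsert keyed on that primary key, and that the checkpoint advances only after a batch is fully written. The `fail_after` parameter exists only to simulate a network interruption.

```python
def copy_with_checkpoint(source_rows, dest, checkpoint, batch_size=2, fail_after=None):
    """Copy rows in primary-key order, advancing a checkpoint per batch.

    source_rows: list of (pk, payload) tuples sorted by pk
    dest:        dict acting as the destination table (pk -> payload)
    checkpoint:  dict holding 'last_pk', the highest key fully written
    fail_after:  batch index at which to simulate a network error
    """
    last_pk = checkpoint.get("last_pk", 0)
    pending = [row for row in source_rows if row[0] > last_pk]
    for n, start in enumerate(range(0, len(pending), batch_size)):
        if fail_after is not None and n == fail_after:
            raise ConnectionError("simulated network interruption")
        batch = pending[start:start + batch_size]
        for pk, payload in batch:
            # Upsert by key: replaying a batch overwrites, never duplicates.
            dest[pk] = payload
        # Advance only after the whole batch has been written.
        checkpoint["last_pk"] = batch[-1][0]


rows = [(i, f"txn-{i}") for i in range(1, 6)]
dest, ckpt = {}, {}
try:
    copy_with_checkpoint(rows, dest, ckpt, fail_after=1)  # fails on the second batch
except ConnectionError:
    pass
copy_with_checkpoint(rows, dest, ckpt)  # resumes after the saved checkpoint
print(len(dest), ckpt)  # 5 {'last_pk': 5}
```

The two properties work together: the checkpoint keeps a restart from skipping records, and the keyed upsert makes it harmless if a restart replays a batch that was written but not yet checkpointed.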

Seamless Transition to Real-Time

Once IDS completed, Xstreami automatically switched to real-time streaming mode. This transition was completely transparent—no manual intervention required, no service disruption, no downtime.

From that moment forward, every payment transaction in MySQL was:

- Captured via Change Data Capture (CDC) within milliseconds

- Transformed according to the same no-code logic configured in the UI

- Streamed to TiDB with sub-100ms latency

- Available immediately for real-time analytics and reporting

The No-Code Advantage

Perhaps the most revolutionary aspect of this project wasn't the scale or speed—it was achieving all of this without writing a single line of code.

Traditional ETL vs. Xstreami

Single-Click Logic Updates

One of the most powerful features of Xstreami is the ability to modify transformation logic instantly through the UI. Here's a real example from the payment project:

Scenario: The business team decided to change how merchant fees are calculated—from a flat percentage to a tiered structure based on transaction volume.

Traditional Approach:

- Data engineer updates Python ETL script

- Code review and testing (2-3 days)

- Deployment to staging environment

- UAT and validation (1-2 days)

- Production deployment window (off-hours)

- Total time: 1 week minimum

Xstreami Approach:

- Business analyst updates fee calculation rule in UI

- Preview transformation with sample data

- Click "Deploy" button

- Logic goes live instantly, applies to all new transactions

- Total time: 15 minutes
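To make the scenario concrete, the sketch below contrasts a flat percentage fee with a tiered fee keyed on a merchant's monthly volume. The rates and thresholds are invented for illustration and do not come from the payment project, and the rule is written in Python only to show the logic that a UI-configured rule would need to express.

```python
from decimal import Decimal

FLAT_RATE = Decimal("0.029")  # hypothetical flat percentage

# (minimum monthly volume, rate), highest tier first. Hypothetical values.
TIERS = [
    (Decimal("1000000"), Decimal("0.019")),
    (Decimal("100000"), Decimal("0.024")),
    (Decimal("0"), Decimal("0.029")),
]


def flat_fee(amount):
    """Old rule: one percentage for every merchant."""
    return (amount * FLAT_RATE).quantize(Decimal("0.01"))


def tiered_fee(amount, monthly_volume):
    """New rule: the rate drops as the merchant's monthly volume grows."""
    for minimum, rate in TIERS:
        if monthly_volume >= minimum:
            return (amount * rate).quantize(Decimal("0.01"))
    raise ValueError("monthly_volume must be non-negative")


if __name__ == "__main__":
    amount = Decimal("100")
    print(flat_fee(amount))                        # 2.90
    print(tiered_fee(amount, Decimal("250000")))   # 2.40
    print(tiered_fee(amount, Decimal("2000000")))  # 1.90
```

The change from `flat_fee` to `tiered_fee` adds one new input, the merchant's volume, so the pipeline must have that value available at transformation time regardless of whether the rule is coded or configured.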

Why No-Code Matters for Payment Systems

In the fast-paced world of fintech data streaming, agility is everything:

- Regulatory Changes: When compliance requirements change, payment systems must adapt immediately. Xstreami enables same-day compliance updates.

- Product Launches: New payment methods, currencies, or merchant types can be added to the data model without code deployments.

- Business Experimentation: A/B testing different risk models or pricing structures requires rapid data pipeline changes—impossible with traditional ETL.

- Reduced Risk: No code means no code bugs, no deployment failures, no production incidents from typos in SQL scripts.

- Team Empowerment: Business analysts who understand payment logic can make changes directly without waiting for engineering resources.

How Does Xstreami Compare to Other Tools?

When evaluating tools for migrating MySQL to a distributed SQL platform like TiDB, the critical differentiators are whether the tool handles historical data migration and real-time streaming simultaneously — and whether it requires custom code to do it.

Unlike tools that handle either historical migration or real-time streaming but not both, Xstreami delivers a unified, no-code pipeline that covers the entire migration lifecycle without a single line of custom code.

Beyond Payment Systems: Universal Connectors

While this case study focuses on MySQL to TiDB migration, Xstreami's platform offers universal connectivity across 50+ data sources and destinations.

Supported Connectors

Multi-Source Scenarios

Xstreami excels at consolidating data from multiple sources into unified destinations:

- Customer 360: Combine data from CRM (Salesforce), support tickets (Zendesk), orders (MySQL), and product usage (MongoDB) into a single customer view

- Real-Time Analytics: Stream from operational databases (PostgreSQL), clickstream data (Kafka), and mobile events (Kinesis) into BigQuery

- Hybrid Cloud: Synchronize on-premise Oracle databases with cloud-native services like Snowflake and Redshift

- Event-Driven Architecture: Capture changes from any database and publish to Kafka topics for downstream microservices

The power of universal connectors: Whether you're migrating MySQL to TiDB (like this case study), syncing PostgreSQL to Snowflake, or streaming MongoDB to Elasticsearch, Xstreami provides the same no-code experience with enterprise-grade reliability.

Key Takeaways for Payment & Fintech Companies

This real-world payment system transformation demonstrates the potential of modern real-time data streaming platforms for payment and fintech workloads.

The Future of Payment Data Infrastructure: This project proves that modern real-time data platforms can handle the most demanding fintech workloads—100 billion records, 40-table consolidations, sub-100ms latency—all without custom code. This is the new standard for payment data pipelines.

Conclusion: The Real-Time Data Revolution

This payment service's journey from 40 fragmented MySQL tables to a single, real-time TiDB analytics powerhouse represents more than just a technical migration—it's a fundamental shift in how modern financial services should approach data infrastructure.

The results speak for themselves:

- 100 billion records migrated without disrupting live production operations using Xstreami's IDS engine

- 95% query performance improvement by eliminating complex multi-table joins

- Real-time payment insights with sub-100ms latency from MySQL to TiDB

- Zero lines of code written—all transformation logic configured through the UI

- Single-click logic updates enabling rapid business adaptation

- 10x faster time-to-market for new payment features and reports

What makes this transformation truly remarkable is not just the scale or speed, but the accessibility. By providing a no-code data transformation platform, Xstreami democratized the ability to build and maintain enterprise-grade data pipelines. Business analysts who understand payment logic can now make changes directly, without waiting for engineering resources.

Looking for a Consulting Firm to Migrate from MySQL to a Distributed SQL Platform?

Mafiree's database consulting team specializes in end-to-end migrations from traditional RDBMS systems to modern distributed SQL platforms, including MySQL to TiDB. We have executed MySQL to TiDB migrations for payment providers, fintech companies, and high-volume e-commerce platforms across India and APAC, combining certified DBA expertise with the Xstreami platform for a fully managed, zero-downtime migration delivery.

What This Means for the Payments Industry

This case study has implications far beyond one payment service:

- For fintech companies: Real-time payment data streaming is no longer a luxury requiring massive engineering investment. It is an accessible capability that can be deployed in weeks

- For data teams: The no-code approach frees engineers from writing and maintaining repetitive ETL scripts, allowing them to focus on higher-value work

- For business leaders: Faster data pipeline development means faster product launches, quicker regulatory compliance, and more agile responses to market changes

- For the industry: As payment volumes continue to explode and real-time expectations become universal, platforms like Xstreami set the new standard for what's possible

The bottom line: Traditional batch ETL is dead. Custom-coded streaming pipelines are overkill. The future belongs to no-code, real-time data platforms that combine the power of enterprise-grade streaming with the accessibility of visual configuration. Xstreami is leading that future.

Is Your Payment System Ready for Real-Time Streaming? 5 Questions to Ask

Before committing to a data infrastructure overhaul, the right questions matter more than the right tools. Here's how to self-assess whether your payment system has the same structural bottlenecks this case study solved:

- How complex are your analytics queries?

If your queries involve multiple joins across several tables, your system is likely optimized for transactions, not analytics. Complex joins increase query time and reduce performance.

In many cases, teams rely on 10–20 table joins, leading to slow reports and heavy database load. A denormalized, analytics-ready structure can simplify queries and dramatically improve performance.

- How fresh is your data?

If your data has even a 15–30 minute delay, your business decisions are always behind reality.

Real-time systems powered by CDC (Change Data Capture) enable sub-100ms latency, ensuring your dashboards, alerts, and decision engines operate on live data, not outdated snapshots.

- How quickly can you adapt to changes?

If updating a business rule requires development effort, testing, and deployment cycles, your system lacks flexibility.

Modern no-code or low-code platforms allow teams to implement changes in minutes instead of days, reducing risk and improving responsiveness to business needs.

- Where is your engineering time going?

If your team spends significant time maintaining ETL pipelines, fixing failures, or handling schema changes, that’s a hidden cost.

Instead of focusing on product innovation, engineers get stuck managing infrastructure. Reducing code-heavy pipelines can free up valuable engineering bandwidth.

- Can your system scale with your growth?

If scaling your system requires re-architecture, your current setup is already a limitation.

A scalable architecture should handle increased data volume and traffic seamlessly, without requiring constant redesign or performance tuning.

If you recognize even a few of these challenges in your current system, it’s a strong signal that your data architecture needs modernization.

Plan Your Real-Time Migration

The payment service in this case study took the leap—and achieved results that exceeded their most optimistic projections. Your organization can too.
